Ship AI you can trust.

Secure, accelerate, and monitor every move your AI makes, all in one platform.

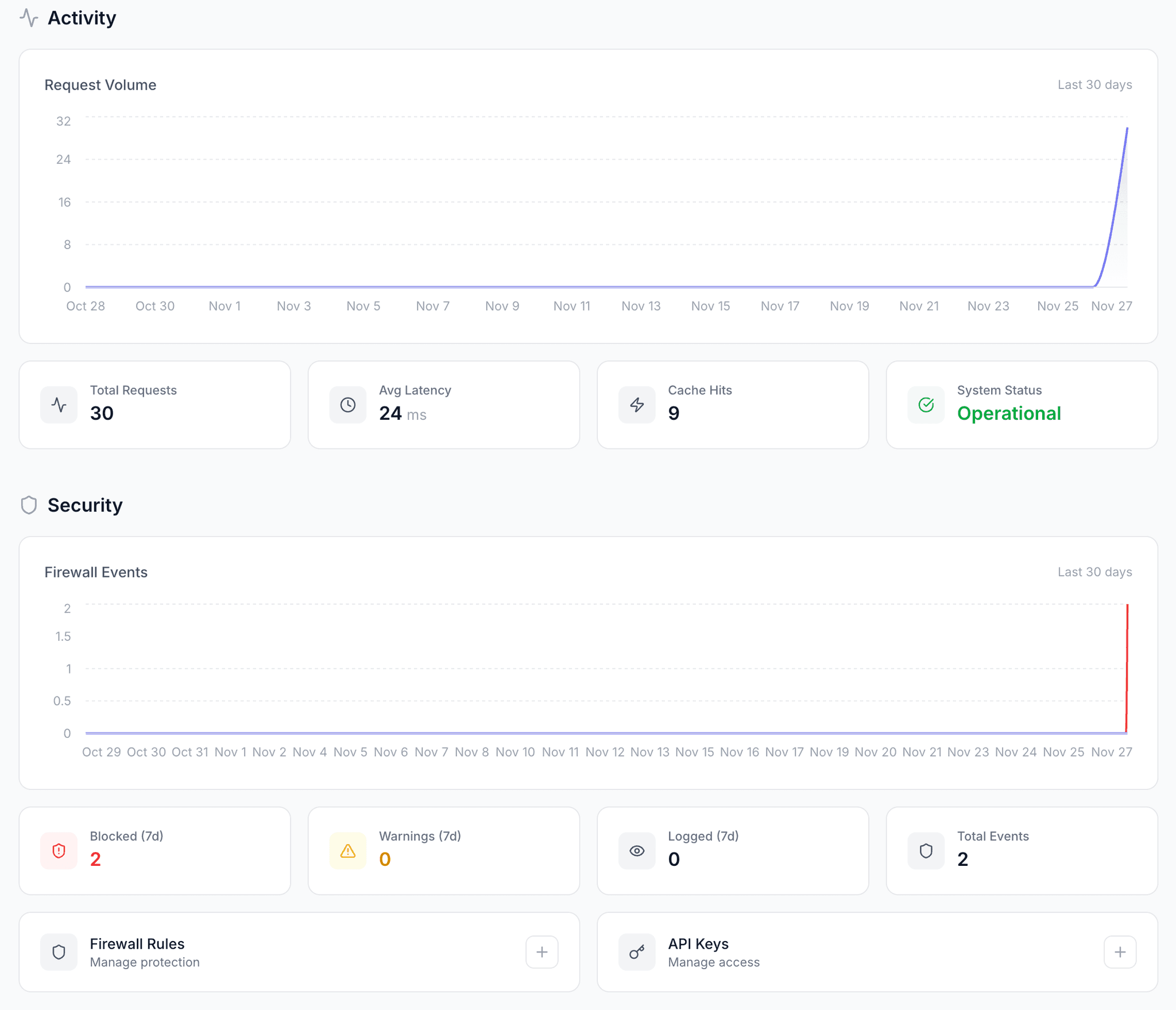

Activity

Request Volume (last 30 days)

- Total Requests: 1.2M
- Avg Latency: 23 ms
- Cache Hits: 847K
- System Status: Operational

Security

Firewall Events (last 30 days)

- Blocked (7d): 124
- Warnings (7d): 89
- Logged (7d): 1.4K
- Total Events: 1.6K

The Control Plane for AI

Get security, caching, observability and more without changing a single line of business logic by routing your AI traffic through Raptor.

Meet Raptor

Smart Proxy Built for AI

Every feature in Raptor works together as one intelligent layer that understands your traffic, protects your data, and delivers insights in real time.

Threat Detection

Block attacks before they reach your LLM

Automatically detects prompt injections, jailbreaks, and malicious inputs. Highlights threats in real time with detailed explanations.

Semantic Cache

Similar prompts, instant responses

Uses embedding-based similarity to cache responses - not just exact matches. Reduces API costs by 50%+ with sub-millisecond lookups.
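To make the idea of embedding-based matching concrete, here is a minimal sketch of a similarity cache. It is an illustration under stated assumptions, not Raptor's implementation: the names `createSemanticCache` and `cosineSimilarity`, the `0.95` threshold, and the linear scan are all hypothetical, and the embeddings are assumed to come from whatever embedding model you already use.

```typescript
// Illustrative sketch only; every name here is hypothetical, not Raptor's API.
type Embedding = number[];

interface CacheEntry {
  embedding: Embedding;
  response: string;
}

// Cosine similarity: 1 means same direction, 0 means orthogonal (unrelated).
function cosineSimilarity(a: Embedding, b: Embedding): number {
  if (a.length !== b.length) {
    throw new Error("Embeddings must have the same dimension");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    const x = a[i] ?? 0;
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / Math.sqrt(normA * normB);
}

// A cache hit is the most similar stored prompt, if it clears the threshold.
function createSemanticCache(threshold = 0.95) {
  const entries: CacheEntry[] = [];
  return {
    store(embedding: Embedding, response: string): void {
      entries.push({ embedding, response });
    },
    lookup(query: Embedding): string | undefined {
      let bestScore = -Infinity;
      let bestResponse: string | undefined;
      // Linear scan for clarity; a production cache would use a vector index.
      for (const entry of entries) {
        const score = cosineSimilarity(query, entry.embedding);
        if (score > bestScore) {
          bestScore = score;
          bestResponse = entry.response;
        }
      }
      return bestScore >= threshold ? bestResponse : undefined;
    },
  };
}
```

The threshold is the main design choice in a cache like this: a lower value yields more hits but risks returning a response to a prompt that only looks similar.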

Request Analytics

Every request, fully visible

See latency breakdowns, token usage, and cost per request. Understand exactly how your AI is being used across your application.
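As a rough sketch of how cost per request can be derived from token usage, the snippet below multiplies token counts by per-million-token prices and rolls the results up into a summary. The record shape, the function names, and any prices you pass in are assumptions for illustration; they are not Raptor's data model or any provider's actual rates.

```typescript
// Illustrative sketch only; shapes and names are hypothetical.
interface TokenUsage {
  promptTokens: number;
  completionTokens: number;
}

// Prices in USD per one million tokens; supply your provider's real rates.
interface ModelPricing {
  inputUsdPerMillion: number;
  outputUsdPerMillion: number;
}

interface RequestRecord {
  latencyMs: number;
  usage: TokenUsage;
}

// Cost of a single request: tokens times price, scaled from per-million.
function requestCostUsd(usage: TokenUsage, pricing: ModelPricing): number {
  const input = usage.promptTokens * pricing.inputUsdPerMillion;
  const output = usage.completionTokens * pricing.outputUsdPerMillion;
  return (input + output) / 1_000_000;
}

// Aggregate view: request count, total tokens, total cost, mean latency.
function summarizeRequests(records: RequestRecord[], pricing: ModelPricing) {
  let totalCostUsd = 0;
  let totalLatencyMs = 0;
  let totalTokens = 0;
  for (const record of records) {
    totalCostUsd += requestCostUsd(record.usage, pricing);
    totalLatencyMs += record.latencyMs;
    totalTokens += record.usage.promptTokens + record.usage.completionTokens;
  }
  return {
    requests: records.length,
    totalTokens,
    totalCostUsd,
    avgLatencyMs: records.length === 0 ? 0 : totalLatencyMs / records.length,
  };
}
```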

Custom Rules Engine

Your security, your rules

Define regex patterns, keyword filters, and semantic rules. Block specific topics, enforce output formats, or redact sensitive data.
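The sketch below shows one way regex and keyword rules could be evaluated against a prompt, with block rules rejecting the request and redact rules rewriting it. The `Rule` shape and the `keywordRule` and `applyRules` helpers are hypothetical and do not reflect Raptor's rule syntax; semantic rules are left out because they would need an embedding model.

```typescript
// Illustrative sketch only; the rule format here is hypothetical.
type Rule =
  | { kind: "block"; name: string; pattern: RegExp }
  | { kind: "redact"; name: string; pattern: RegExp; replacement: string };

interface RuleResult {
  allowed: boolean;
  text: string;
  matched: string[];
}

// Build a case-insensitive, whole-phrase block rule from plain keywords.
function keywordRule(name: string, keywords: string[]): Rule {
  if (keywords.length === 0) throw new Error("At least one keyword is required");
  const escaped = keywords.map((k) => k.replace(/[.*+?^${}()|[\]\\]/g, "\\$&"));
  return { kind: "block", name, pattern: new RegExp(`\\b(?:${escaped.join("|")})\\b`, "i") };
}

// Apply rules in order: the first matching block rule rejects the input,
// while redact rules replace every match and evaluation continues.
function applyRules(input: string, rules: Rule[]): RuleResult {
  let text = input;
  const matched: string[] = [];
  for (const rule of rules) {
    const { source, flags } = rule.pattern;
    if (rule.kind === "block") {
      // Strip g/y so test() never depends on a stale lastIndex.
      const probe = new RegExp(source, flags.replace(/[gy]/g, ""));
      if (probe.test(text)) {
        matched.push(rule.name);
        return { allowed: false, text, matched };
      }
    } else {
      const everywhere = new RegExp(source, flags.includes("g") ? flags : flags + "g");
      const redacted = text.replace(everywhere, rule.replacement);
      if (redacted !== text) {
        matched.push(rule.name);
        text = redacted;
      }
    }
  }
  return { allowed: true, text, matched };
}
```

Rule order matters in this sketch: placing redact rules before block rules means block patterns are tested against the already redacted text.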

How it works

Raptor connects to your LLM provider and turns every request into a secure, cached, and observable transaction - built for production AI.

01

Connect Your LLM Provider

Link Raptor to your OpenAI, Anthropic, or other LLM provider. It securely proxies your requests - so all AI traffic flows through a single, protected endpoint.
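One way to wire up this step is to assemble the client options in a single place and fail fast when a key is missing. This is a sketch, not an official helper: `buildRaptorClientOptions` is a hypothetical name, while the proxy URL, the `X-Raptor-Api-Key` header, and the environment variable names are the ones used in the integration snippet on this page.

```typescript
// Illustrative sketch only; buildRaptorClientOptions is a hypothetical helper.
interface RaptorClientOptions {
  apiKey: string;
  baseURL: string;
  defaultHeaders: Record<string, string>;
}

// Reads both keys from an env-like object and throws early if either is missing,
// so a misconfigured deployment fails at startup rather than on the first request.
function buildRaptorClientOptions(
  env: Record<string, string | undefined>,
): RaptorClientOptions {
  const apiKey = env["OPENAI_API_KEY"];
  const raptorKey = env["RAPTOR_API_KEY"];
  if (!apiKey) throw new Error("OPENAI_API_KEY is not set");
  if (!raptorKey) throw new Error("RAPTOR_API_KEY is not set");
  return {
    apiKey,
    baseURL: "https://proxy.raptordata.dev/",
    defaultHeaders: { "X-Raptor-Api-Key": raptorKey },
  };
}
```

In a Node application the result would typically be passed straight to the SDK constructor, for example `new OpenAI(buildRaptorClientOptions(process.env))`.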

02

Configure Your Rules

Raptor indexes and analyzes your traffic in real time - detecting threats, caching responses, and logging everything. Every request becomes observable and protected.

03

Monitor & Optimize

Ask Raptor anything about your AI usage - from blocked threats to cost breakdowns. Get instant insights, visual dashboards, and smart alerts without changing your code.

    // Before
    import OpenAI from "openai";

    const openai = new OpenAI({
      apiKey: process.env.OPENAI_API_KEY,
    });

    const response = await openai.chat.completions.create({
      model: "gpt-5",
      messages: [{ role: "user", content: prompt }],
    });

    // After - just change the base URL
    const openai = new OpenAI({
      apiKey: process.env.OPENAI_API_KEY,
      // Route all requests through the Raptor proxy instead of calling OpenAI directly.
      baseURL: "https://proxy.raptordata.dev/",
      // Identifies your Raptor account; the OpenAI key above is still sent as usual.
      defaultHeaders: {
        "X-Raptor-Api-Key": process.env.RAPTOR_API_KEY,
      },
    });

Pricing

Pricing that grows with you

All plans include full access to security, caching, and analytics. Start free and scale as you grow.

Free

Everything you need to get started with AI security.

- 10,000 requests/month

- ML-powered threat detection

- Semantic caching

- Request analytics & tracing

- Custom rules engine

- API dashboard

- Community support

Pro

Most popular

For growing teams that need more capacity.

- 500,000 requests/month

- ML-powered threat detection

- Semantic caching

- Request analytics & tracing

- Custom rules engine

- API dashboard

- Priority email support

Enterprise

Dedicated support and compliance for your organization.

- Unlimited requests

- ML-powered threat detection

- Semantic caching

- Request analytics & tracing

- Custom rules engine

- API dashboard

- SLA guarantees

- Data residency options

- Dedicated support

- Custom integrations

Unlock Smarter AI Workflows

Start optimizing security, caching, and insights before anyone else.